A conceptual affective design framework for the use of emotions in computer game design

Vol. 9, No. 3 (2015)

Special issue: Experience and Benefits of Game Playing

The purpose of this strategy of inquiry is to understand how emotions influence gameplay and to review contemporary techniques to design for them, with the aim of devising a model that brings the currently disparate parts of the game design process together. Emotions sit at the heart of a game player’s level of engagement. They are evoked across many of the components that facilitate gameplay, including the interface, the player’s avatar, non-player characters and narrative. Understanding the role of emotion in creating truly immersive and believable environments is critical for game designers. After discussing a taxonomy of emotion, this paper will present a systematic literature review of designing for emotion in computer games. Following this, a conceptual framework for affective design is offered as a guide for the future of computer game design.

affective computing; emotion; framework; computer games; game design

Penny de Byl

Faculty of Society and Design, Bond University, Australia

Professor Penny de Byl teaches and researches in Games Development and Interactive Multimedia at Bond University, Australia. Prior to this she taught serious games theory in Breda, The Netherlands and computer science at the University of Southern Queensland. In 2007 she won a national award for her work in Virtual Worlds and in 2008 a national teaching excellence and research fellow award. She has published widely in peer reviewed journals and is the author of four books on game development including the acclaimed “Holistic Game Development”. Penny’s areas of expertise include e-learning, serious games, augmented reality and affective computing.

Baillie, P. (2002). The synthesis of emotions in artificial intelligences (Doctoral dissertation). University of Southern Queensland.

Beatson, O. (2015). Csikszentmihalyi's flow model [SVG]. Wikipedia. Retrieved from http://en.wikipedia.org/wiki/Mihaly_Csikszentmihalyi

Beskow, J., & Nordenberg, M. (2005, January). Data-driven synthesis of expressive visual speech using an MPEG-4 talking head. Paper presented at INTERSPEECH, Lisbon. (pp. 793-796). Retrieved from www.speech.kth.se/prod/publications/files/1281.pdf

Brown, C., Yannakakis, G., & Colton, S. (2012). Guest editorial: Special issues on computational aesthetics in games. IEEE Transactions on Computational Intelligence and AI in Games, 4, 149-151. http://dx.doi.org/10.1109/TCIAIG.2012.2212900

Collins, K. (2009). An introduction to procedural music in video games. Contemporary Music Review, 28, 5-15. http://dx.doi.org/10.1080/07494460802663983

Collins, K., Kapralos, B., & Kanev, K. (2014). Smart table computer interaction interfaces with integrated sound. Retrieved from http://www.researchgate.net/profile/Karen_Collins6/publication/260750965_Smart_Table_Computer_Interaction_Interfaces_with_Integrated_Sound/links/0c960537b45602df94000000.pdf

Crawford, C. (1984). The art of computer game design. Retrieved from http://www-rohan.sdsu.edu/~stewart/cs583/ACGD_ArtComputerGameDesign_ChrisCrawford_1982.pdf

Csikszentmihalyi, M. (1997). Flow and the psychology of discovery and invention. Harper Perennial, New York.

Cunningham, S., Grout, V., & Picking, R. (2010). Emotion, content, and context in sound and music. In M. Grimshaw (Ed.), Game sound technology and player interaction: Concepts and developments (pp. 235–263). http://dx.doi.org/10.4018/978-1-61692-828-5.ch012

Damasio, A. R. (1999). How the brain creates the mind. Scientific American-American Edition, 281, 112-117. http://dx.doi.org/10.1038/scientificamerican1299-112

de Peuter, A. (2014). Development of an adaptive game by runtime adjustment of game parameters to EEG measurements. Retrieved from http://www.few.vu.nl/~apr350/bachelor_project/Scriptie_Auke_de_Peuter_2512078.pdf

Denton, D. A. (2006). The primordial emotions: The dawning of consciousness. Oxford University Press.

Desurvire, H., & Wiberg, C. (2009). Game usability heuristics (PLAY) for evaluating and designing better games: The next iteration. In A. Ozok & P. Zaphiris (Eds.), Online communities and social computing (pp. 557-566). Berlin Heidelberg: Springer.

Dickey, M. D. (2006). Game design narrative for learning: Appropriating adventure game design narrative devices and techniques for the design of interactive learning environments. Educational Technology Research and Development, 54, 245-263. http://dx.doi.org/10.1007/s11423-006-8806-y

Drossos, K., Floros, A., & Kanellopoulos, N. G. (2012). Affective acoustic ecology: Towards emotionally enhanced sound events. In A. Floros & I. Zannos (Eds.), Proceedings of the 7th Audio Mostly Conference: A Conference on Interaction with Sound (pp. 109-116), ACM, New York. Retrieved from http://dl.acm.org/citation.cfm?doid=2371456.2371474

Dupire, J., Gal, V., & Topol, A. (2009). Physiological player sensing: New interaction devices for video games. In S. Stevens & S. Saldamarco (Eds.), Entertainment computing - ICEC 2008 (pp. 203-208). Berlin Heidelberg: Springer. http://dx.doi.org/10.1007/978-3-540-89222-9_25

Fairclough, S. (2008). BCI and physiological computing for computer games: Differences, similarities & intuitive control. Proceedings of CHI’08. Retrieved from http://shfairclough.com/Stephen_Fairclough_Research/Publications_physiological_computing_mental_effort_stephen_fairclough_files/chi08_final.pdf

Fanselow, M. S. (1994). Neural organization of the defensive behaviour system responsible for fear. Psychonomic Bulletin Review, 1, 429-438. http://dx.doi.org/10.3758/BF03210947

Freeman, D. (2004). Creating emotion in games: The craft and art of emotioneering™. Computers in Entertainment (CIE), 2(3), article 15. http://dx.doi.org/10.1145/1027154.1027179

Garner, T., Grimshaw, M., & Nabi, D. A. (2010). A preliminary experiment to assess the fear value of preselected sound parameters in a survival horror game. Proceedings of the 5th Audio Mostly Conference: A Conference on Interaction with Sound. (pp. 10). ACM. http://dx.doi.org/10.1145/1859799.1859809

Garner, T., & Grimshaw, M. (2011, September). A climate of fear: Considerations for designing a virtual acoustic ecology of fear. In Proceedings of the 6th Audio Mostly Conference: A Conference on Interaction with Sound (pp. 31-38). ACM. http://dx.doi.org/10.1145/2095667.2095672

Gebhard, P., Schröder, M., Charfuelan, M., Endres, C., Kipp, M., Pammi, S., . . . Türk, O. (2008). IDEAS4Games: Building expressive virtual characters for computer games. In H. Prendinger, J. Lester & Ishizuka (Eds.), Intelligent virtual agents (pp. 426-440). Berlin Heidelberg: Springer. http://dx.doi.org/10.1007/978-3-540-85483-8_43

Gemrot, J., Kadlec, R., Bída, M., Burkert, O., Píbil, R., Havlíček, J., . . . Brom, C. (2009). Pogamut 3 can assist developers in building AI (not only) for their videogame agents. In F. Dignum, J. Bradshaw, B. Silverman, & W. van Doesburg (Eds.), Agents for games and simulations (pp. 1-15). Berlin Heidelberg: Springer. http://dx.doi.org/10.1007/978-3-642-11198-3_1

Gilroy, S. W., Cavazza, M., & Benayoun, M. (2009). Using affective trajectories to describe states of flow in interactive art. Proceedings of the International Conference on Advances in Computer Entertainment Technology (pp. 165-172). http://dx.doi.org/10.1145/1690388.1690416

Hefner, D., Klimmt, C., & Vorderer, P. (2007). Identification with the player character as determinant of video game enjoyment. In L. Ma, M. Rauterberg, & R. Nakatsu (Eds.), Entertainment computing–ICEC 2007 (pp. 39-48). Berlin Heidelberg: Springer. http://dx.doi.org/10.1007/978-3-540-74873-1_6

Hoeberechts, M., & Shantz, J. (2009). Realtime emotional adaptation in automated composition. Proceedings of the 4th Audio Mostly Conference (pp. 1-8). Retrieved from http://mmm.csd.uwo.ca/People/gradstudents/jshantz4/pubs/hoeberechts_shantz_2009.pdf

Hubbard, J. A. (2001). Emotion expression processes in children's peer interaction: The role of peer rejection, aggression, and gender. Child Development, 72, 1426-1438. http://dx.doi.org/10.1111/1467-8624.00357

Hudlicka, E. (2008). Affective computing for game design. In Proceedings of the 4th Intl. North American Conference on Intelligent Games and Simulation (GAMEON-NA) (pp. 5-12), Montreal, Canada.

Ip, B. (2011). Narrative structures in computer and video games. Part 1: Context, definitions, and initial findings. Games and Culture, 6, 103-134. http://dx.doi.org/10.1177/1555412010364982

Isbister, K., & DiMauro, C. (2011). Waggling the form baton: Analyzing body-movement-based design patterns in Nintendo Wii games, toward innovation of new possibilities for social and emotional experience. In D. England (Ed.), Whole body interaction (pp. 63-73). London: Springer. http://dx.doi.org/10.1007/978-0-85729-433-3_6

Jabareen, Y. R. (2009). Building a conceptual framework: Philosophy, definitions, and procedure. International Journal of Qualitative Methods, 8(4), 49-62. http://dx.doi.org/10.1007/978-0-85729-433-3

Johnson, D., & Wiles, J. (2003). Effective affective user interface design in games. Ergonomics, 46, 1332-1345. http://dx.doi.org/10.1080/00140130310001610865

Koestler, A. (1967). The ghost in the machine. Hutchinson: London.

Kovács, G., Ruttkay, Z., & Fazekas, A. (2007). Virtual chess player with emotions. In Proceedings of the 4th Hungarian Conference on Computer Graphics and Geometry. Retrieved from http://www.inf.unideb.hu/~fattila/CV/Downloads/Proc33.pdf

Kromand, D. (2007). Avatar categorization. In Proceedings from DiGRA 2007: Situated Play (pp. 400-406).

Larsen, R., & Diener, E. (1992). Promises and problems with the circumplex model of emotion. Emotion, 13, 25–59.

Laureano-Cruces, A. L., Acevedo-Moreno, D. A., Mora-Torres, M., & Ramírez-Rodríguez, J. (2012). A reactive behavior agent: Including emotions into a video game. Journal of Applied Research and Technology, 10, 651-672.

Lefton, L. A. (1994). Psychology (5th ed.), Allyn and Bacon: Boston.

Liljedahl, M. (2011). Sound for fantasy and freedom. In Grimshaw, M. (Ed.), Game sound technology and player interaction: Concepts and developments (pp. 22-44). IGI Global.

Livingstone, S. R., & Brown, A. R. (2005). Dynamic response: Real-time adaptation for music emotion. In Proceedings of the second Australasian conference on Interactive entertainment (pp. 105-111). Creativity & Cognition Studios Press.

Machine Elf 1735. (2011). Plutchik’s Wheel of Emotions [SVG]. Wikipedia. Retrieved from http://en.wikipedia.org/wiki/Contrasting_and_categorization_of_emotions#Plutchik.27s_wheel_of_emotions

MacLean, P. D. (1958). Contrasting functions of limbic and neocortical systems of the brain and their relevance to psychophysiological aspects of medicine. The American Journal of Medicine, 25, 611-626. http://dx.doi.org/10.1016/0002-9343(58)90050-0

Mansilla, W. A. (2006). Interactive dramaturgy by generating acousmêtre in a virtual environment. In R. Harper, M. Rauterberg, & M. Combetto (Eds.), Entertainment computing-ICEC 2006 (pp. 90-95). Berlin Heidelberg: Springer. http://dx.doi.org/10.1007/11872320_11

Mehrabian, A. (1995). Framework for a comprehensive description and measurement of emotional states. Genetic, social, and general psychology monographs, 121, 339-361.

Merckx, P. P. A. B., Truong, K. P., & Neerincx, M. A. (2007). Inducing and measuring emotion through a multiplayer first-person shooter computer game. In Proceedings of the Computer Games Workshop Amsterdam (pp. 7-6).

Miles, M. B., & Huberman, A. M. (1994). Qualitative data analysis: An expanded sourcebook. SAGE.

Mori, M. (1970). The uncanny valley. Energy, 7(4), 33–35.

Murray, J. (2005). Did it make you cry? Creating dramatic agency in immersive environments. In G. Subsol (Ed.), Virtual storytelling. Using virtual reality technologies for storytelling (pp. 83-94). Springer Berlin Heidelberg.

Nacke, L. E., Grimshaw, M. N., & Lindley, C. A. (2010). More than a feeling: Measurement of sonic user experience and psychophysiology in a first-person shooter game. Interacting with Computers, 22, 336-343. http://dx.doi.org/10.1016/j.intcom.2010.04.00

Ochs, M., Sabouret, N., & Corruble, V. (2008). Modeling the dynamics of non-player characters' social relations in video games. In Proceedings of the Fourth Artificial Intelligence and Interactive Digital Entertainment Conference. Retrieved from https://www.researchgate.net/publication/220978562_Modeling_the_Dynamics_of_Non-Player_Characters%27_Social_Relations_in_Video_Games

Ortony, A., Clore, G. L., & Collins, A. (1990). The cognitive structure of emotions. Cambridge University Press.

Ortony, A., & Turner, T. J. (1990). What's basic about basic emotions? Psychological Review, 97, 315-331. http://dx.doi.org/10.1037/0033-295X.97.3.315

Peña, L., Peña, J. M., & Ossowski, S. (2011). Representing emotion and mood states for virtual agents. In Klugl & S. Ossowski (Eds.), Multiagent system technologies (pp. 181-188). Berlin Heidelberg: Springer. http://dx.doi.org/10.1007/978-3-642-24603-6_19

Pert, C. B. (1997). Molecules of emotion: Why you feel the way you feel. Simon and Schuster.

Picard, R. W. (1997). Affective computing. MIT Press.

Plutchik, R. (1980). Emotion: A psychoevolutionary synthesis. Harper Collins College Division.

Popescu, A., Broekens, J., & van Someren, M. (2014). Gamygdala: An emotion engine for games. Affective Computing, IEEE Transactions on, 5, 32-44.

Przybylski, A. K., Weinstein, N., Murayama, K., Lynch, M. F., & Ryan, R. M. (2011). The ideal self at play the appeal of video games that let you be all you can be. Psychological Science, 23, 69-76. http://dx.doi.org/0956797611418676

Robison, J., McQuiggan, S., & Lester, J. (2009). Evaluating the consequences of affective feedback in intelligent tutoring systems. In Affective Computing and Intelligent Interaction and Workshops, 2009. ACII 2009. 3rd International Conference on (pp. 1-6). IEEE.

Rusch, D. C., & König, N. (2007). Barthes revisited: Perspectives on emotion strategies in computer games. Computer Philology Yearbook.

Russell, J. A. (2003). Core affect and the psychological construction of emotion. Psychological Review, 110, 145-172. http://dx.doi.org/10.1037/0033-295X.110.1.145

Sandercock, J., Padgham, L., & Zambetta, F. (2006). Creating adaptive and individual personalities in many characters without hand-crafting behaviors. In J. Gratch, M. Young, R. Aylett, D. Baillin, & P. Olivier (Eds.), Intelligent virtual agents (pp. 357-368). Berlin Heidelberg: Springer. http://dx.doi.org/10.1007/11821830_29

Scherer, K. R., & Ekman, P. (Eds.). (2014). Approaches to emotion. Psychology Press.

Schönbrodt, F. D., & Asendorpf, J. B. (2011). The challenge of constructing psychologically believable agents. Journal of Media Psychology: Theories, Methods, and Applications, 23, 100-107. http://dx.doi.org/10.1027/1864-1105/a000040

Shaver, P., Schwartz, J., Kirson, D., & O'connor, C. (1987). Emotion knowledge: Further exploration of a prototype approach. Journal of Personality and Social Psychology, 52, 1061-1086. http://dx.doi.org/10.1037/0022-3514.52.6.1061

Shilling, R., Zyda, M., & Wardynski, E. C. (2002, November). Introducing emotion into military simulation and video game design America's army: Operations and VIRTE. In Proceedings of GAME-ON 2002.

Shinkle, E. (2008). Video games, emotion and the six senses. Media, Culture, and Society, 30, 907-915. http://dx.doi.org/10.1177/0163443708096810

Silva, D. R., Siebra, C. A., Valadares, J. L., Almeida, A. L., Frery, A. C., da Rocha Falcão, J., & Ramalho, L. (2000). A synthetic actor model for long-term computer games. Virtual Reality, 5, 107-116. http://dx.doi.org/10.1007/BF01424341

Skalski, P., & Whitbred, R. (2010). Image versus sound: A comparison of formal feature effects on presence and video game enjoyment. PsychNology Journal, 8, 67-84.

Smith, C. A., & Kirby, L. D. (2009). Putting appraisal in context: Toward a relational model of appraisal and emotion. Cognition and Emotion, 23, 1352-1372. http://dx.doi.org/10.1080/02699930902860386

Strauss, A. L. (1987). Qualitative analysis for social scientists. Cambridge University Press.

Sweetser, P., & Wyeth, P. (2005). GameFlow: A model for evaluating player enjoyment in games. Computers in Entertainment (CIE), 3(3), article 3.

Takatalo, J., Häkkinen, J., Kaistinen, J., & Nyman, G. (2010). Presence, involvement, and flow in digital games. In R. Bernhaupt (Ed.), Evaluating user experience in games (pp. 23-46). London: Springer. http://dx.doi.org/10.1007/978-1-84882-963-3_3

Tinwell, A. (2009). Uncanny as usability obstacle. In A. Ozok & P. Zaphiris (Eds.), Online communities and social computing (pp. 622-631). Berlin Heidelberg: Springer. http://dx.doi.org/10.1007/978-3-642-02774-1_67

Tinwell, A., Grimshaw, M., & Abdel-Nabi, D. (2011). Effect of emotion and articulation of speech on the uncanny valley in virtual characters. In S. D’Mello, A. Graesser, B. Schuller, & J. Martin (Eds.), Affective computing and intelligent interaction (pp. 557-566). Berlin Heidelberg: Springer. http://dx.doi.org/10.1007/978-3-642-24571-8_69

Tinwell, A., Grimshaw, M., Nabi, D. A., & Williams, A. (2011). Facial expression of emotion and perception of the uncanny valley in virtual characters. Computers in Human Behavior, 27, 741-749. http://dx.doi.org/10.1016/j.chb.2010.10.018

Tomlinson, B., & Blumberg, B. (2003). Alphawolf: Social learning, emotion and development in autonomous virtual agents. In W. Truszkowski, M. Hinchey, & C. Rouff (Eds.), Innovative concepts for agent-based systems (pp. 35-45). Berlin Heidelberg: Springer. http://dx.doi.org/10.1007/978-3-540-45173-0_2

Truong, K. P., van Leeuwen, D. A., & Neerincx, M. A. (2007). Unobtrusive multimodal emotion detection in adaptive interfaces: Speech and facial expressions. In D. Schmorrow & L. Reeves (Eds.), Foundations of augmented cognition (pp. 354-363). Berlin Heidelberg: Springer. http://dx.doi.org/10.1007/978-3-540-73216-7_40

van Tol, R., & Huiberts, S. (2008). IEZA: A framework for game audio. Retrieved from http://www.gamasutra.com/view/feature/131915/ieza_a_framework_for_game_audio.php

Weiner, B. (1986). An attributional theory of motivation and emotion. Springer Science & Business Media.

Weiner, B. (1992). Human motivation: Metaphors, theories, and research. Sage.

Yun, C., Shastri, D., Pavlidis, I., & Deng, Z. (2009). O'game, can you feel my frustration? Improving user's gaming experience via stresscam. Proceedings of the SIGCHI Conference on Human Factors in Computing Systems (pp. 2195-2204). Retrieved from www.cpl.uh.edu/publication_files/C49.pdf

Zagalo, N., Torres, A., & Branco, V. (2006). Passive interactivity, an answer to interactive emotion. In R. Harper, M. Rauterberg, & M. Combetto (Eds.), Entertainment computing-ICEC 2006 (pp. 43-52). Berlin Heidelberg: Springer. http://dx.doi.org/10.1007/11872320_6

Zammitto, V., DiPaola, S., & Arya, A. (2008). A methodology for incorporating personality modeling in believable game characters. In Proceedings of Game Research and Development – Cybergames (pp. 24-31).

Introduction

Affective computing (Picard, 1997) is the domain in which technology that can recognise, simulate and understand human emotion is studied and developed. The aim of the area is to design and create natural human-user interfaces, that is, software that responds to the emotional needs of the user, and to provide a connective experience that bridges the gap between humans and technology. Within the field of computer games, affect is used to develop believable characters (Baillie, 2002), facilitate flow (Johnson & Wiles, 2003), enable haptic interfaces (Hudlicka, 2005), motivate learning (Robison, McQuiggan, & Lester, 2009), evoke compelling narrative (Rusch & König, 2007), provoke competition (Hubbard, 2001), and facilitate enjoyment (Sweetser & Wyeth, 2005). Freeman (2004) suggests there are over 1500 ways to evoke emotion in games. Critics of his work have described it as a presentation of bad screenwriting practices. However, eliciting emotion in computer games is a legitimate research and development field that goes far beyond the writing of narrative and draws on fields such as computer science, artificial intelligence, psychology and physiology.

Today’s computer games are very different from the originals, in which interaction was achieved using a simple joystick, mouse or keyboard. Games are now capable of more advanced human-computer interactions, with interfaces that cater to almost all human senses. With emotion being defined as a subjective response, accompanied by a physiological change that affects behaviour and decision making (Koestler, 1967; Lefton, 1994), it would seem the domain of computer games, given the array of accompanying technology, is ripe for the introduction of emotions as a form of game interactivity. However, the process is not as simple as testing skin conductance and determining a player’s deep emotional, psychological state. Such practice would require emotion recognition and generation to be embedded within the design process. It would also require a thorough understanding of the role of emotions in games, knowledge of emotion research in psychology and neuroscience, comprehension of emotion sensing and recognition software and technology, an appreciation for affect expression in game characters, and mastery of cognitive models of affect.

Preliminary examinations of the literature have led to the hypothesis that the concept of emotion, although identified as a key design consideration in computer games, is dealt with in a haphazard and superficial way through poor definition. Furthermore, affect is considered under a holistic umbrella rather than as a multilayered and complex psychological phenomenon. Today the literature consists of papers focusing on eliciting emotional responses from narrative and creating emotionally believable non-player characters. However, the role of emotions in computer games extends far beyond these limited approaches, with emotion being the primal seat of motivation and behaviour in humans.

In order to provide an overview of the current use of emotion in game design, this research examines the questions:

What is the current breadth and depth of the use of emotions in computer games design?

How should human emotions be designed for in computer games?

The results will be used to develop a framework that elucidates the potential of emotions as an integral part of computer game interaction and provides a roadmap for the design of affective computer games, highlighting key areas and revealing those that require further research. Furthermore, it will provide a formal approach to understanding the role emotions play in games at all levels and bridge the gap between game design, development and technology. The framework presented will act as a tool to assist designers, developers, researchers and academics to embed affective constructs within new games and understand those employed in existing ones.

Background

Freeman (2004) provides a superficial argument for building emotion into games. He suggests “putting emotions in games” (p. 1) will (1) expand the games audience demographic, (2) lead to increased social hype in the marketplace, (3) gain more airtime in the press, (4) seem less amateurish, (5) inspire the creative team, (6) evoke consumer loyalty, (7) lead to greater profits, (8) provide a competitive advantage, and (9) place the game ahead of the competition. The process, which he coined emotioneering, includes 32 techniques for injecting emotion into computer games. The majority of these methods focus on narrative and deepening relationships with non-player characters. The folk wisdom that strong narrative and emotional involvement should make one cry (Murray, 2005) suggests the affective measure of a game be tied solely to sadness and associated emotional states. However, the domain of human emotion is vast and multilayered in such a way that it cannot be touched by narrative alone.

Emotion presents itself as a non-concise term in many of the domains that boast an understanding of the topic (Baillie, 2002). These range from neurology (Pert, 1997) and psychology (Smith & Kirby, 2009) to artificial intelligence (Picard, 1997). The reason may be that the term is used to describe a large range of cognitive and physiological states in humans. Emotions are often referred to in the broad sense, to describe not only familiar feelings such as happiness and sadness, but also biological motivational urges such as hunger and thirst (Pert, 1997). Koestler (1967) summed up this general view, defining emotions as mental states of a widespread nature accompanying intense feelings and bodily changes. The difficulty plaguing this subject matter is that a person rarely experiences a pure emotion (Koestler, 1967). For example, feelings of hunger may be accompanied by feelings of frustration. However, there is a logical, intuitive difference between defining hunger as an emotion and defining frustration as an emotion. Another general definition, given by Lefton (1994), describes emotion as a subjective response accompanied by a physiological change that is cognitively processed by the individual, resulting in a change in behaviour.

When it comes to understanding emotion, there are two schools of scientific enquiry. The first approaches the physiological nature of affect and how it is generated and dealt with at the biological/chemical level. MacLean (1958), one of the pioneers of physiological-emotion theory, explains human emotions as the result of the interaction of three very differently structured brain types: the reptilian brain, the paleo-mammalian brain and the neo-mammalian brain. His Three Brain Theory suggests the human brain has inherited the structures of these three brains as the result of the evolutionary process. The reptilian brain corresponds to the basic structure in the phylogenetically oldest parts of the human brain such as the medulla, hypothalamus, the reticular system and the basal ganglia. This area is said to be responsible for visceral and glandular regulations, primitive activities, reflexes, arousal and sleep.

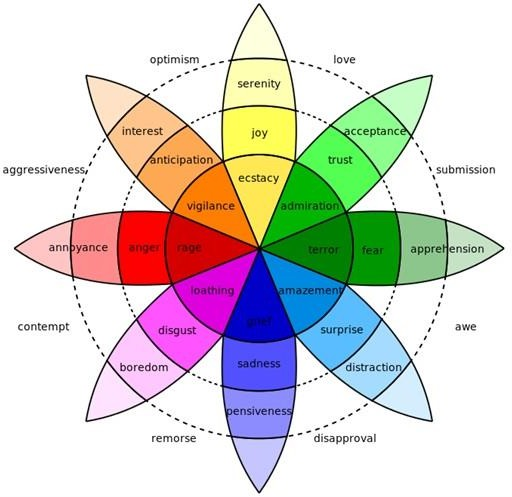

The second school of enquiry examines the way in which the brain interprets emotional states from physiological actions. It is the path taken by psychology, and encompasses the cognitive theories of emotion. This standpoint on emotion generation, otherwise known as appraisal theory and considered by a number of psychologists, explains the generation of emotions using distinct evaluations of situations to categorise arising affective states. These appraisal theories involve grouping emotional states as positive or negative and continue to decompose these into sub-states according to other discrete characteristics such as expected/unexpected, certain/uncertain and high control/low control. Shaver, Schwartz, Kirson, and O’Connor (1987) identify six atomic emotional states: love, joy, anger, sadness, fear and surprise. Although they believe that emotional experience is very distinct in different cultural situations, these six basic emotions or a subset thereof are universal throughout the theories of emotion (Ortony & Turner, 1990; Plutchik, 1980; Smith & Kirby, 2000). The overlapping of these emotions can produce all other emotional states. Such emotion blending, given a set of elementary emotions, is an idea also shared by Plutchik (1980), as shown by Plutchik’s Wheel of Emotions, illustrated in Figure 1.

Figure 1. Plutchik’s Wheel of Emotions (Machine Elf 1735, 2011).

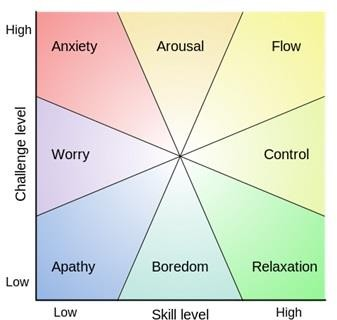

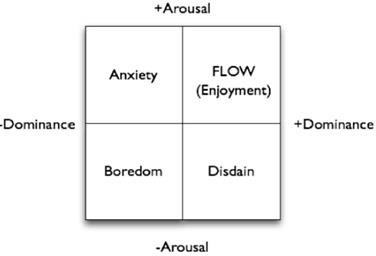
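The emotion blending attributed to Plutchik (1980) above can be sketched as a lookup over pairs of elementary emotions. The following is a minimal illustration only: it assumes the eight primary emotions and the adjacent-pair blends (primary dyads) as they are commonly labelled on Plutchik's wheel, and the names `PRIMARY`, `PRIMARY_DYADS`, `opposite` and `blend` are hypothetical helpers introduced here, not part of any published implementation.

```python
from typing import Optional

# Plutchik's eight primary emotions in wheel order (assumed labelling);
# neighbours in this list are adjacent on the wheel.
PRIMARY = ["joy", "trust", "fear", "surprise",
           "sadness", "disgust", "anger", "anticipation"]

# Primary dyads: the blend of two adjacent primary emotions.
PRIMARY_DYADS = {
    frozenset({"joy", "trust"}): "love",
    frozenset({"trust", "fear"}): "submission",
    frozenset({"fear", "surprise"}): "awe",
    frozenset({"surprise", "sadness"}): "disapproval",
    frozenset({"sadness", "disgust"}): "remorse",
    frozenset({"disgust", "anger"}): "contempt",
    frozenset({"anger", "anticipation"}): "aggressiveness",
    frozenset({"anticipation", "joy"}): "optimism",
}


def opposite(emotion: str) -> str:
    """Return the emotion sitting directly across the wheel."""
    index = PRIMARY.index(emotion)
    return PRIMARY[(index + 4) % len(PRIMARY)]


def blend(first: str, second: str) -> Optional[str]:
    """Return the primary dyad for two adjacent emotions, or None.

    Order does not matter; non-adjacent or identical pairs are not
    covered by this sketch and yield None.
    """
    return PRIMARY_DYADS.get(frozenset({first, second}))


if __name__ == "__main__":
    print(blend("joy", "trust"))
    print(opposite("fear"))
```

A game character model might use such a table to label a mixed state once two elementary emotions are active at the same time; a fuller model would also need intensities, which this sketch omits.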

With respect to computer games, emotions form the mechanism by which a player becomes immersed in the medium; demonstrating a larger emotional investment in the game than any other medium. Crawford (1984) likens computer games to art forms that “present [their] audience with fantasy experiences that simulate emotion” (p. 3). This viewpoint clearly makes the link between emotion and a state of game immersion. Total immersion is likened to the mental state of flow (Csikszentmihalyi, 1997) as illustrated in Figure 2, in which a player is fully immersed in an activity through feelings of focus, involvement and enjoyment.

Figure 2. Csikszentmihalyi’s Flow Model (Beatson, 2015).

Unlike the literature from psychology and physiology, within computer games scholarship, umbrella use of the terms emotion and feeling is common. This leads to inadequate analysis, and hence understanding, of a phenomenon that is regarded as a critical component in connecting the player with the gaming medium. In this study, the superficial level of the investigation of emotion with respect to designing computer games will be revealed and the consequences discussed. To provide a building block for the conceptual framework for emotion in game design, a taxonomy of emotion will be elucidated and grouped into categories, which will be used as a basis for the final framework and to extract themes from the literature surveyed in the methodology. The five categories selected are common across the majority of cognitive models presented herein and based on Picard’s (1997) five components necessary for developing an affective system.

A Taxonomy of Emotion

Given the plethora of definitions, types and causes of emotion, a taxonomy of emotion can be extracted showing categories based on generation and effect. These categories are levelled according to MacLean’s (1958) theory that emotions have evolved with each new layer added to the human brain, from the original fast-reacting reptilian brain through to the civilised neo-mammalian brain. While emotion generation and responses cannot be physiologically linked by scientific investigation to MacLean’s layers of the brain, the layers do provide a conceptual structure for ordering the types of emotion.

Mind-Body emotions. Produced by homeostatic mechanisms, these emotions drive human behaviour that is governed by viscerogenic and bodily needs (Weiner, 1992). Examples include hunger for food and the desire for sleep. These primordial emotions have emerged as consciousness during the evolution process, to address the basic survival needs and thus act as physiological motivators to address those needs (Denton, 2006).

Fast primary emotions. Reactions and behaviours generated by the archicortex and mesocortex are otherwise known as fast primary emotions and can be likened to the stimulus response experiments of the behavioural psychologists (Koestler, 1967). These emotions refer to the quick acting emotions that serve as the human’s initial response to a stimulus and act as a survival mechanism. They include effects such as fear and startledness.

Emotional Experience. Otherwise referred to as core affect (Russell, 2003), emotional experiences are a continued mental state. They are always present, as is one’s body temperature, and encompass an individual’s mood. Examples include gloomy, tense and calm. The idea of a core affect aligns with Damasio’s (1999) view of emotion, in general, being a background state of the body.

Cognitive Appraisals. Unlike background emotion that endures, an appraisal is one that results after some activity or encounter. These are best explained through the lens of cognitive appraisal where the valence (pleasure/displeasure) and arousal (level of activation) are considered in assessing an emotional state. One such model of cognitive appraisal is the OCC model (Ortony, Clore, & Collins, 1990) in which events are appraised in order to determine an appropriate emotional response. Table 1 illustrates 8 of the possible 22 emotional states from the OCC model.

Table 1. A subset of the OCC Cognitive Structure of Emotion.

| Consequential Disposition | Focus Object | Consequences for Focus Object | Resulting Emotion |
| --- | --- | --- | --- |
| Pleased | Other | Desirable | Happy for |
| Pleased | Other | Undesirable | Gloating |
| Displeased | Other | Desirable | Resentment |
| Displeased | Other | Undesirable | Pity |
| Pleased | Self | Relevant | Hope |
| Pleased | Self | Irrelevant | Joy |
| Displeased | Self | Relevant | Fear |
| Displeased | Self | Irrelevant | Distress |

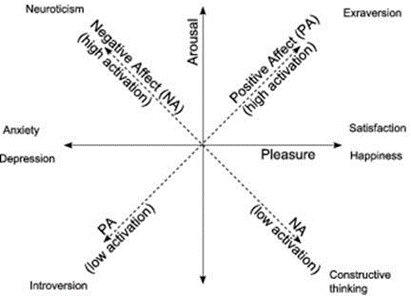

Another well cited appraisal model is Mehrabian’s Pleasure-Arousal-Dominance model (1995), illustrated in Figure 3. The model presents a range of emotional responses as assessed by the three dimensions, after which it is named.

Figure 3. Mehrabian’s Pleasure-Arousal-Dominance (PAD) model of emotions (Larsen & Diener, 1992).
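A point in the three-dimensional space that the PAD model describes can be sketched as a small data structure. The following is an illustrative sketch only: the class name `PAD`, the methods `distance_to` and `blend`, and the choice to scale each dimension to the range -1 to 1 are assumptions made for the example and are not taken from Mehrabian's work or from any of the reviewed papers.

```python
from dataclasses import dataclass
import math


@dataclass(frozen=True)
class PAD:
    """A hypothetical emotional state as a point in pleasure-arousal-dominance space."""
    pleasure: float
    arousal: float
    dominance: float

    def __post_init__(self):
        # Assumption for this sketch: every dimension is scaled to [-1, 1].
        for name in ("pleasure", "arousal", "dominance"):
            value = getattr(self, name)
            if not -1.0 <= value <= 1.0:
                raise ValueError(f"{name} must lie in [-1, 1], got {value}")

    def distance_to(self, other: "PAD") -> float:
        """Euclidean distance between two states; smaller means more similar."""
        return math.dist(
            (self.pleasure, self.arousal, self.dominance),
            (other.pleasure, other.arousal, other.dominance),
        )

    def blend(self, other: "PAD", weight: float) -> "PAD":
        """Linear interpolation: weight 0 returns self, weight 1 returns other."""
        def mix(a: float, b: float) -> float:
            return a + weight * (b - a)

        return PAD(
            mix(self.pleasure, other.pleasure),
            mix(self.arousal, other.arousal),
            mix(self.dominance, other.dominance),
        )
```

Treating an emotional state as a vector in this way is what allows states to be compared and blended arithmetically, which may be one reason dimensional models are attractive for game systems.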

Emotional Behaviour. All emotion activates a level of motivation. Whether that motivation leads to action depends on the strength of the valence and the type of arousal. This category does not stand alone but rather cuts through all of the aforementioned categories. If a person is hungry enough they will eat, if they are frightened enough they will flee and if they are very happy for another they will celebrate. Predicting a person’s actions from the type of arousal and its valence becomes more difficult with each higher level (category) of emotion. Determining that a starving man will eat when presented with food, or that a woman will jump out of the way of an oncoming vehicle, is very different from foreseeing a boy’s actions toward his sister because he resents her getting the bigger bedroom. Weiner’s (1986) attribution theory of motivation and emotion suggests that the behavioural consequences of an emotional reaction integrate valence and arousal with background emotions, personal beliefs, personal goals, and rational assessment of forthcoming behavioural consequences.

As these categories illustrate, emotion is a multidimensional and multifaceted phenomenon. Its application to computer games scholarship and design has far-reaching implications. How it is currently perceived and used in computer games scholarship is the focus of the following study, which aims to extract the key categories of computer game design in which emotion plays the biggest part.

Methodology

A conceptual framework (Miles & Huberman, 1994) is ideal for examining emotion in computer games as it presents the key factors, constructs and variables and identifies the associated relationships in a given area of interest. The qualitative method for building conceptual frameworks (Jabareen, 2009) based on grounded theory (Strauss, 1987), was used in this study as it was necessary not only to collect concepts from existing literature, but to examine the integral role each plays in the final affective framework. Grounded theory is sufficient for developing such a framework due to a paradigm of inquiry that reveals numerous distinct features through coding methods.

Data Collection

A literature search covering 1995 to 2015 was carried out via Google Scholar to locate published research papers relevant to this study. A preliminary search using terms including emotion, affect, computer games and video games identified relevant search criteria. Three thorough searches were then performed with:

(emotion) AND (computer games OR video games OR videogames)

(emotion AND framework) AND (computer games OR video games OR videogames)

(emotion AND design) AND (computer games OR video games OR videogames)

Each search returned a Google Scholar maximum of 3000 papers. When duplicates were removed 1007 remained. To narrow down the selection of papers for inclusion in this study, the collection was searched for the terms design and framework, plus specific emotions and citations of prominent affect researchers.
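The merging and narrowing steps described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the procedure actually used in the study: the function names `normalise_title`, `merge_without_duplicates` and `mentions_any` are hypothetical, and matching duplicates on a normalised title is only one plausible way of detecting them.

```python
from typing import Iterable, List


def normalise_title(title: str) -> str:
    """Lower-case the title and replace punctuation with spaces so near-identical titles match."""
    cleaned = "".join(ch.lower() if ch.isalnum() else " " for ch in title)
    return " ".join(cleaned.split())


def merge_without_duplicates(*result_sets: Iterable[str]) -> List[str]:
    """Combine several result lists, keeping the first occurrence of each title."""
    seen = set()
    merged = []
    for results in result_sets:
        for title in results:
            key = normalise_title(title)
            if key not in seen:
                seen.add(key)
                merged.append(title)
    return merged


def mentions_any(text: str, terms: Iterable[str]) -> bool:
    """True if the text contains at least one of the narrowing terms (case-insensitive)."""
    lowered = text.lower()
    return any(term.lower() in lowered for term in terms)
```

For example, `merge_without_duplicates(search_one, search_two, search_three)` would yield a single list, which could then be narrowed with `mentions_any(paper_text, ["design", "framework"])`.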

The specific emotions were from Ortony & Turner’s (1990) extensive list of basic emotions and included acceptance, anger, anticipation, anxiety, aversion, contempt, courage, dejection, desire, despair, disgust, distress, elation, expectancy, fear, guilt, grief, happiness, hate, hope, interest, joy, love, pain, panic, pleasure, rage, sadness, shame, sorrow, subjection, surprise, tender-emotion, terror and wonder. Additional emotional terms including instinct, urge, drive, pleasant, unpleasant, valence and arousal were used to widen the search to pick up research addressing other areas of the aforementioned taxonomy.

The collection of papers was narrowed down further by focusing on papers which (a) included empirical evidence relating to the use of emotion in computer games, (b) focused on the use of emotion as an integral mechanic in game design, and (c) were published in refereed journals or conference proceedings. In addition, papers that examined why people play games, the application of computer games (e.g. in learning or therapy) and the psychological effects on players (e.g. violent tendencies) were excluded, as they did not focus on game design. Using these criteria, 52 papers were selected for inclusion in the study. These papers are listed in the Appendix.

Coding

Categorisations to be used for coding reached saturation point after the first 40 papers. The codes extracted were obtained by systematically scrutinising each paper for common phrases and terminology within the search domain. These categories were used to code the papers in terms of the main aim of the study, the type of research and the specific use of emotions in the game design. The type of research was coded according to whether it was a randomised control trial (RCT), a quasi-experimental design, a survey, qualitative, analytical, a comparative analysis or descriptive. The uses of emotions in game design were coded according to their functional use; narrative, interface, the player’s avatar or non-player characters (NPCs).

Results and Discussion

Main Literature Search and Paper Selection

From the initial 1007 documents that met the search criteria, the majority were speculative, literature reviews, critical analyses of existing games, examinations of the impact and use of computer games, mentioned computer games in passing or were unpublished. Fifty-two papers met the inclusion criteria and discussed the use of emotion in the design of differing aspects of computer games. The content was diverse in scope and drew knowledge from humanities, psychology, game studies and computer science. To find the categorical dimension of themes a narrative synthesis approach was implemented.

Categorisation of Papers. Examination of the content of the papers revealed that four distinct focal categories in game design were being targeted for embedding emotional constructs; these themes emerged from manual coding of the first 40 papers. The categories of interface, NPC, avatar and narrative, discussed later, are shown in Table 2 along with the number of papers in each area.

Table 2. Number of papers focusing on types of emotion use in game design by research design.

| Use of Emotion | Analytical | Comparative | Descriptive | Qualitative | Quasi-experimental | RCT | Survey | Total |
|---|---|---|---|---|---|---|---|---|
| INTERFACE | 3 | 1 | 8 | 0 | 7 | 2 | 2 | 23 |
| NPC | 8 | 0 | 7 | 1 | 2 | 1 | 1 | 20 |
| AVATAR | 0 | 0 | 1 | 1 | 0 | 2 | 0 | 4 |
| NARRATIVE | 0 | 1 | 3 | 0 | 0 | 1 | 0 | 5 |
| Total | 11 | 2 | 19 | 2 | 9 | 6 | 3 | 52 |

The diversity of the number of studies and research designs employed within these areas will now be presented and explored.

Research Designs. Table 2 reveals the differing types of research methodologies employed in the 52 selected papers. Both social science and computer science methods are considered. At the beginning of the study only social science methods were being considered; however, given the number of papers stemming from the computer science domain, the inclusion of extra categories became essential so as not to risk counting such papers in an inappropriate category. Overall, descriptive studies (19) were the most frequent, followed by analytical (11), quasi-experimental (9), RCT (6), survey (3), and comparative and qualitative studies (2 each).
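The row and column totals reported in Table 2 can be checked with a few lines of arithmetic. The sketch below simply transcribes the cell counts from the table; the variable names are chosen for illustration.

```python
# Cell counts transcribed from Table 2 (rows: use of emotion, columns: research design).
DESIGNS = ["Analytical", "Comparative", "Descriptive", "Qualitative",
           "Quasi-experimental", "RCT", "Survey"]

COUNTS = {
    "Interface": [3, 1, 8, 0, 7, 2, 2],
    "NPC":       [8, 0, 7, 1, 2, 1, 1],
    "Avatar":    [0, 0, 1, 1, 0, 2, 0],
    "Narrative": [0, 1, 3, 0, 0, 1, 0],
}

# Papers per use-of-emotion category (row totals).
row_totals = {use: sum(row) for use, row in COUNTS.items()}

# Papers per research design (column totals).
column_totals = {
    design: sum(row[i] for row in COUNTS.values())
    for i, design in enumerate(DESIGNS)
}

grand_total = sum(row_totals.values())

# Research designs ordered from most to least frequent.
ranked = sorted(column_totals.items(), key=lambda item: item[1], reverse=True)

print(row_totals)
print(ranked)
print(grand_total)
```

The computed totals agree with the margins of the table: 23, 20, 4 and 5 papers by category, and 52 papers overall.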

In computer science, descriptive research takes place during the early understandings of a system. It is applied in areas, for example, where observations about human-machine interactions take place. As the use of emotion in games as a design construct is in its infancy and not fully understood, it is no surprise the majority of papers investigated focused on this stage.

Analytical research occurs in computer science during system design. It involves the examination of causal relationship between interconnected parts of a system as well as devising hybrid approaches to bring seemingly disparate mechanisms together. It therefore makes sense it is the second most applied methodology in a domain aimed at recognition and synthesis of human emotions by machines. The results also reveal that more research studies taking place in the domain of emotions in computer games are occurring in software engineering and computer science disciplines, than in social sciences.

With respect to the number of studies focussed on the specific emotional use categories, interface and NPC papers greatly outnumber those on avatars and narrative. Avatars are driven by the player and would seem to require very little programming other than to include appropriate animations. Designing emotion recognition or synthesis in such a construct could be seen as futile as the game designer relies on the player’s own emotions to drive the avatar. The avatar is merely a puppet on the end of the player’s controller. Designing emotion into narrative may also seem futile as narratology, even before games, sought to evoke emotion in the audience. The lack of papers concentrated in this area could indicate that game designers believe all that can be done with respect to understanding and embedding emotion in narrative has been achieved throughout the long history of storytelling.

In contrast, interface and NPC design are as new as the medium of computer games. Designing for them must change with technology. As more powerful computing processors become available, the way in which a player interacts with a game is evolving rapidly. Where once a player controlled a rudimentary dot on the screen using a keyboard or simple joystick, they can now provide voice, gestures and biometrics as input. Output has also advanced from a black and white cathode-ray tube screen to augmented reality and simulated 3D with headsets and specialised glasses.

NPC design has evolved with the fields of artificial intelligence, computer graphics and animation. As computers have increased in their processing and display abilities, so have the techniques employed to generate adaptive and believable behaviour and photorealistic visuals, and designers have been less restricted than they were in the past in the life-like qualities they can impart to these virtual game characters.

Emotion Design Research categories

The focus of research within these four categories will now be examined.

Narrative. Five papers in the current review addressed designing for emotions in computer game narrative. Ip (2011) overviews stories, plots and narratives and examines the techniques of the hero’s journey, the three-act structure, and character archetypes including discussion on the portrayal of human emotions. He suggests that “emotions play a vital role in the development of more convincing and richer narratives” (p. 110), and that insight into human emotion and behaviour applicable to game environments can be found in the categorisation of the basic and mixed emotions by Plutchik (1980).

Brown, Yannakakis, & Colton (2012) presented emotion in game narrative as one of three main areas of computational aesthetics whose aim is “to enhance the richness of the playing experience, and afford sensual, visceral, and/or intellectual stimulation to the player” (p. 149). They suggest capturing and reflecting the player’s emotional state in the game environment through music, visuals and adaptive stories can improve game aesthetics. Mansilla (2006) also suggests narrative can be deepened using game environment aesthetics focusing on the use of off-screen sounds, known as acousmetre, to stimulate the player’s imagination and extend the game environment beyond the confines of the screen. The researcher found through experimenting with acousmetre in a game environment that suspense can be evoked and that the increase in occurrence of these sounds correlated with participants’ corresponding emotional state.

Another paper focuses on the evoking of a single emotional state: sadness. Zagalo, Torres, & Branco (2006) argue that sadness is easier to induce in cinematic viewers than in players of interactive games, as it is a negative emotion with a deactivated physiological and behavioural state and therefore lends itself to a passive audience. Their solution, which they call passive interactivity, consists of three phases: attachment, rupture and passivity. During the attachment phase the player is encouraged to form a bond with an in-game character. After a suitable amount of time, this bond is ruptured; the character dies or the player is separated from them indefinitely. The rupture elicits sadness; however, it is short-lived. They suggest this state can be extended by providing lower activity actions resulting in reattachment or the creation of new attachments.
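The three phases of passive interactivity lend themselves to a simple state machine. The following sketch is one possible reading of the description above and is not the implementation of Zagalo et al.; the class `PassiveInteractivity`, the `bonding_time_needed` parameter and the example low-activity actions are all hypothetical.

```python
from enum import Enum, auto
from typing import List


class Phase(Enum):
    ATTACHMENT = auto()
    RUPTURE = auto()
    PASSIVITY = auto()


class PassiveInteractivity:
    """Tracks which of the three phases the player is currently in."""

    def __init__(self, bonding_time_needed: float):
        # Hypothetical tuning value: how long the player must spend with the
        # character before a rupture is assumed to be emotionally effective.
        self.bonding_time_needed = bonding_time_needed
        self.time_with_character = 0.0
        self.phase = Phase.ATTACHMENT

    def spend_time_with_character(self, seconds: float) -> None:
        """Accumulate bonding time, but only during the attachment phase."""
        if self.phase is Phase.ATTACHMENT:
            self.time_with_character += seconds

    def trigger_rupture(self) -> bool:
        """Break the bond only once it has had a suitable amount of time to form."""
        bonded = self.time_with_character >= self.bonding_time_needed
        if self.phase is Phase.ATTACHMENT and bonded:
            self.phase = Phase.RUPTURE
            return True
        return False

    def offer_low_activity_actions(self) -> List[str]:
        """After a rupture, move to passivity and offer calm, low-activity actions."""
        if self.phase is Phase.RUPTURE:
            self.phase = Phase.PASSIVITY
        if self.phase is Phase.PASSIVITY:
            return ["revisit a shared location", "form a new attachment"]
        return []
```

The guard in `trigger_rupture` reflects the point that the separation is only effective after the bond has formed; a rupture attempted too early is simply refused.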

Interface. From the papers considered in this review, three clear themes emerged around the use and importance of emotions in player-computer game interfaces: sound, devices and frameworks. Sound in interactive media augments the storyline, increases engagement and provides information (Collins, Kapralos, & Kanev, 2014). As Cunningham, Grout, and Picking (2010) discuss, sound in games occurs on two levels: diegetic (sound whose source is visible on the screen such as the voices of characters), and non-diegetic (sound whose source is not visible or considered part of the game action such as narrator’s commentary or mood music). Van Tol and Huiberts (2008) extend this idea, presenting game audio as a two-dimensional space with diegetic/non-diegetic on one axis, and setting (where the game takes place)/activity (what the player is doing) on the other. Although Garner, Grimshaw, and Nabi (2010) found that players don’t place any importance on sound as a feature in games, Nacke, Grimshaw, and Lindley (2010) found they notice if it is not there. Furthermore, the absence of sound had a detrimental effect on player engagement and immersion.

Sound complements the other senses. Shilling, Zyda, and Wardynski (2002) investigated the use of sound in America’s Army to convey the feeling and emotion of handling a weapon in lieu of being able to hold it.

Liljedahl (2011) insists that sound is an essential feature that should be considered in game design in parallel with graphics to create “unique, immersive and rewarding gaming experiences” (p. 40).

A number of researchers have recognised the need to design and develop adaptive and procedural music that reflects the current ambience, mood and player’s emotional state (Drossos, Floros, & Kanellopoulos, 2012; Hoeberechts & Shantz, 2009; Livingstone & Brown, 2005). Collins (2009) discusses the benefits of procedurally generated music as an alternative to pre-recorded tracks, as the computer creates the game audio in real-time and hence can reduce production times and game file sizes. However, she is quick to point out that computer generated music is not yet at the point where it can immerse players in the game through emotional induction as traditional music can.

Of the papers investigated, fear was the only emotion singled out for acoustic design consideration. Garner and Grimshaw (2011) examine the induction of fear in the player and present ideas for reliable manipulation of the fear experience. They discuss Fanselow’s (1994) three stages of fear induction in a game audio design context, namely caution, terror and horror, in which each preceding stage primes the player’s emotions for the next. At each point, the researchers suggest playing sounds that reflect a “horrifying sensation” (p. 7) such as gasping or screaming. In previous work they examined the significance of the sound parameters of pitch, alteration and decibel level to inducing fear in games but found no conclusive evidence to say they had an effect on the participants of their study (Garner et al., 2010).

The second grouping of papers in this section examined human-computer interaction with emphasis on peripheral devices. Dupire, Gal, and Topol (2009) examined the use of an electrode covered T-shirt to gather player biometrics and convert them into emotional states for recognition and processing. Fairclough (2008) discussed how brain-computer interfaces, such as electroencephalogram (EEG) apparatus, could be used to enhance computer game interaction by providing intuitive control of a player’s character as well as providing emotional state information to which the game could adapt. De Peuter (2014) also examined the use of EEG measurements for adaptive gameplay. He used the readings to change the game environment’s lighting to calm a stressed player or stress a calm player in a horror game scenario.
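The kind of adaptive loop described for the horror game scenario can be sketched as a simple proportional adjustment. This is an illustration only and does not reproduce De Peuter's system: the function `adjust_brightness`, the `gain` parameter, the normalisation of stress and brightness to the range 0 to 1, and the assumption that brighter lighting calms while darker lighting raises tension are all hypothetical.

```python
def adjust_brightness(current: float, stress: float,
                      target_stress: float, gain: float = 0.5) -> float:
    """Nudge scene brightness so the player's stress drifts toward a target.

    All values are assumed to be normalised to [0, 1]. The stress estimate
    would come from a biometric source such as EEG; here it is just a number.
    Assumption for this sketch: brighter scenes calm, darker scenes unsettle.
    """
    # Positive error: the player is more stressed than the designer intends.
    error = stress - target_stress
    proposed = current + gain * error
    # Keep the result within the valid brightness range.
    return min(1.0, max(0.0, proposed))
```

With a target stress of 0.5, a reading of 0.9 raises the brightness and a reading of 0.1 lowers it, so the same rule covers both calming a stressed player and unsettling a calm one.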

Truong, van Leeuwen, and Neerincx (2007) examined less intrusive methods of emotional state recognition as game input, using speech and facial expressions, and Yun, Shastri, Pavlidis, and Deng (2009) investigated reading player stress levels via a webcam. In both instances, the researchers were interested in capturing the player’s state in order to provide a natural human-computer interface for gameplay.

In contrast to emotion detection by a game, Isbister and DiMauro (2011) investigated the emotion evoking capabilities of the Nintendo Wii, suggesting that full-body gaming involvement leads to positive emotional experiences. In their investigation of available games that use the Wiimote, they found little designed to incite emotion through movement mechanics. Shinkle (2008) also examines the potential of game controllers to facilitate emotional expression, proposing that a player’s physical presence, their actions and gestures at the interface level produce involved emotional gameplay.

In the interface category there were a number of papers that presented original frameworks for using emotion to enhance game playability and game engagement. Takatalo, Häkkinen, Kaistinen, and Nyman (2010) described a Presence, Involvement and Flow Framework (PIFF) that addresses emotion as necessary for all three elements. Also specifically addressing flow, Gilroy, Cavazza, and Benayoun (2009) describe a framework that dispenses with Csikszentmihalyi’s (1997) original model, replacing challenge and skill with the PAD factors as illustrated in Figure 4.

To facilitate game development early in the process Desurvire and Wiberg (2009) present a list of heuristics inspired by formal human-computer interaction (HCI) design principles. In their framework, they allow for emotional connection citing the importance of recognising that the player has emotional ties to the game world as well as their avatar.

Last, in the papers discussing game interfaces, Merkx, Truong, and Neerincx (2007) reminded game designers through their empirical study that players’ self-reported valence is more positive when they believe their opponent is another human, a fact never ignored by those working on creating believable avatars and NPCs.

Figure 4. Affective mapping of flow channels (Gilroy, Cavazza, & Benayoun, 2009, p. 6).

Avatars. Kromand (2007) identifies two types of avatar: central and acentral. A central avatar acts as a shell the player steps into when they play and it becomes their embodiment in the virtual game environment. For example, in Splinter Cell, the player becomes Sam Fisher, the main character. An acentral avatar however does not become the player’s virtual body. Rather the player plays with the avatar (and maybe more than one at a time) like dolls. This is best illustrated in The Sims. Unlike central avatars that provide a high level of emotional proximity (Dickey, 2006) where the player identifies with their avatar as another version of themselves, acentral avatars require a build-up of sympathy through which the player connects.

Przybylski, Weinstein, Murayama, Lynch, and Ryan (2011) found that computer games in which players identified with their avatars as their ideal-selves had the greatest influence on evoking player emotions. In addition, they discovered that high levels of difference between a player’s actual-self and their ideal-self magnified this effect. Hefner, Klimmt, and Vorderer (2007) reported that players also adopted a different self-concept during gameplay in which they would become “more courageous, heroic and powerful” (p. 1), and that player-character identification correlates with game enjoyment.

Research into emotional design in computer game avatars featured in the smallest grouping of papers (4) in this review. This could be because researchers believe players will bring their own human emotions to the character. However, in the case of acentral avatars, such as those in The Sims, the characters are autonomous when not being controlled by the player and in need of processing and expressing emotion. Much of this behaviour is addressed in the following NPC section.

NPCs. Many of the papers reviewed presented frameworks for designing and developing believable NPCs. The majority of these based their models on the OCC appraisal theory. Popescu, Broekens, and van Someren (2014) presented their emotion engine for games (GAMYGDALA). They implemented a version of OCC that translates emotional responses into the PAD format of emotional intensities and degree of dominance. The engine takes a game event as input and outputs an emotional state. The Gemrot et al. (2009) ALMA project is also based on OCC and generates short-term affects, medium-term moods and long-term personality traits for use in game characters. The researchers’ goal is to create a character whose behaviour is consistent and believable for long-term interactions with a player. This is also the objective for Silva et al. (2000), who present a framework for long-term personality cohesion using OCC. Other research projects in the same vein include Laureano-Cruces, Acevedo-Moreno, Mora-Torres, and Ramirez-Rodriguez’s (2012) reactive agent, which can reorder its goals based on its priorities and current emotional state, and Peña, Peña, and Ossowski’s (2011) Mood Vector Space, which combines OCC and PAD to enable mathematical manipulation of emotion constructs. Sandercock, Padgham, and Zambetta (2006) also use OCC as their cognitive appraisal generator but introduce the concept of coping. While the coping mechanism is inherent in the design of the aforementioned models, it is highlighted in this case. Their NPCs with adaptive personalities express affect based on an emotional state’s rate of uptake, rate of decay and thresholds for expression and coping. The coping mechanism forces the NPC to act in order to equalise an unpleasant dominating emotional state.
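The interplay of uptake, decay and thresholds described for adaptive NPC personalities can be sketched as follows. This is a minimal illustration under stated assumptions and is not the model of Sandercock et al.: the class `Emotion`, its field names, the intensity range of 0 to 1 and the multiplicative decay rule are all hypothetical choices made for the example.

```python
from dataclasses import dataclass


@dataclass
class Emotion:
    """One emotional state of a hypothetical NPC, with intensity in [0, 1]."""
    name: str
    uptake: float      # fraction of an appraised stimulus absorbed per event
    decay: float       # fraction of the intensity lost on every game tick
    express_at: float  # intensity at which the NPC visibly shows the emotion
    cope_at: float     # intensity at which the NPC must act to reduce it
    intensity: float = 0.0

    def appraise(self, stimulus: float) -> None:
        """Absorb part of a stimulus produced by some appraisal step."""
        self.intensity = min(1.0, self.intensity + self.uptake * stimulus)

    def tick(self) -> None:
        """Let the emotion fade a little as game time passes."""
        self.intensity *= 1.0 - self.decay

    def response(self) -> str:
        """Return 'cope', 'express' or 'none' depending on the thresholds."""
        if self.intensity >= self.cope_at:
            return "cope"
        if self.intensity >= self.express_at:
            return "express"
        return "none"
```

Two characters given different `uptake`, `decay` and threshold values would react differently to the same sequence of events, which is one way a personality could be expressed through parameters rather than through separate behaviour code.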

Another theme highlighted in this group of papers was the need to consider how social relationships impact on emotion. Ochs, Sabouret, and Corruble’s (2008) model for NPC behaviour focuses on the dynamics of social relationships and includes data about the NPC’s emotional state, the player’s emotional state and the apparent relationship that may exist between the two. Tomlinson and Blumberg (2003), in addition to considering social behaviour, include the concept of emotional memories in their virtual wolf-like characters. When calculating the next emotional state their NPCs have the ability to express emotion and remember its association with past events and environmental stimuli. Schönbrodt and Asendorpf (2011) consider social relationships as an important construct in the simulated psychology of an NPC. Unlike the aforementioned models, however, they shy away from using OCC in favour of the Zurich Model of Social Interaction, which they claim serves as an “integrative and rather exhaustive model” (Schönbrodt & Asendorpf, 2011, p. 18), and uses emotion as an internal process to trigger coping strategies.

Sitting on the other side of the NPC development coin from internal processing is the visual aspect. For a game character to be considered truly believable it must not only act like an intelligent, emotional creature, but also look like one. A number of papers in this review examined the physical representation of emotions in a character with particular emphasis on facial expression, such as Zammitto, DiPaola, and Arya’s (2008) IFACE facial animation system. IFACE processes a character’s geometry, knowledge, personality and mood to generate a facial expression based on a set of basic emotions. The works of Beskow and Nordenberg (2005), Kovács, Ruttkay, and Fazekas (2007), and Gebhard et al. (2008) consider similar functionality but also add expressive speech. In all works the researchers merge facial expressions with lip-synching techniques to provide additional motive to character dialogue. Gebhard et al. (2008) take their work one step further and also implement full body expression.

A concept related to the outward appearance of a virtual character is the uncanny valley phenomenon (Mori, 1970). This effect is the low point in negative perception of an artificial being. It provokes an uneasy feeling in an observer that something is not quite human about characters designed for photorealism. Mori explains that the closer a designer tries to make a character look like the real thing, the more it will remind the viewer that it is not real. Tinwell, Grimshaw, and Abdel-Nabi (2011) found the uncanny effect was controllable through the animation of mouth movements. Exaggerated movements reduced the uncanny effect on faces displaying anger while it increased in faces expressing happiness. Tinwell (2009) also found that game characters did not need to be photo-realistic to be accepted as real by players. Furthermore, Tinwell, Grimshaw, Nabi, and Williams (2011) revealed that the uncanny valley is more prevalent in emotional expressions when animation in the upper face is limited.

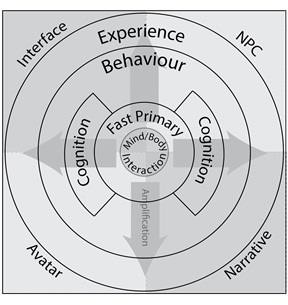

A Conceptual Affective Design Framework

This framework draws inspiration from the taxonomy of emotion outlined earlier and the papers reviewed in the previous section. It presents a holistic view of game design with respect to enabling emotional mechanisms in each of the four areas of interface, NPC, avatars and narrative. As Picard (1997) suggests, there are five components necessary in developing an affective computing system. These include emotional behaviour, fast primary emotions, cognitive appraisals, emotional experience and mind-body interfaces. The proposed framework positions these as a layered emotional system in which the core is amplified by subsequent outer layers as illustrated in Figure 5. The idea of affect amplifying human drives comes from the work of Scherer and Ekman (2014), who suggest emotional experience occurs in emotional subsystems and influences other subsystems with respect to subjective experiences.

Each emotional layer has design implications for each of the four mechanisms. Many of these have been addressed in the papers reviewed herein, with each considering a smaller part of the bigger picture. When the dimensions of the conceptual framework are considered alongside the body of research work examined, a gap emerges in computer game design methodology with respect to integrating emotions.
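One way to make the pairing of layers and mechanisms concrete is to treat the framework as a grid in which every cell holds the design decisions made for one layer and one mechanism. The sketch below is an illustrative aid only, not part of the framework itself: the function names are hypothetical, and the order of `LAYERS` simply follows the order of the subsections below rather than making any claim about the arrangement in Figure 5.

```python
from itertools import product
from typing import Dict, List, Tuple

LAYERS = [
    "mind-body interaction",
    "fast primary emotions",
    "cognitive appraisals",
    "emotional behaviour",
    "emotional experience",
]
MECHANISMS = ["interface", "NPC", "avatar", "narrative"]

Cell = Tuple[str, str]


def empty_design_grid() -> Dict[Cell, List[str]]:
    """Create one empty list of design notes per (layer, mechanism) pair."""
    return {cell: [] for cell in product(LAYERS, MECHANISMS)}


def uncovered_cells(grid: Dict[Cell, List[str]]) -> List[Cell]:
    """List the pairs for which no design decision has been recorded yet."""
    return [cell for cell, notes in grid.items() if not notes]
```

A design team could record a note such as `grid[("fast primary emotions", "interface")].append("startling audio cues")` and then use `uncovered_cells(grid)` to see which of the twenty combinations remain unaddressed.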

Mind-Body Interaction

Designing for a mind-body interface requires the system to recognise the emotions of the player and translate them for the game to use. Interface options occur across a continuum of methods from the player telling the system how they feel to the system reading the player’s emotional state using biometric readings. As emotional-proximity between player and their avatar correlates with game enjoyment, the central avatar should reflect the player’s emotional state. Research is yet to examine portraying the player’s emotional state on the avatar by way of facial expressions or gestures. If the avatar were seen to be in the same emotional state as the player it may increase emotional-proximity and hence intensify immersion. The player’s emotions can also be used by a game’s NPCs to react to the player, as would another human, given that players tend to be more engaged when they believe they are playing against another human.

Figure 5. A conceptual affective design framework.

Gesture-based games inherently bring mind-body interaction into the narrative whereas in games that interface via a traditional controller or keyboard, the player’s body is less involved. These types of games still instigate player body movements not necessary to game play such as stillness to facilitate focus and movements that support the control of the game such as the player leaning over when turning the corner in a racing game.

Fast Primary Emotions

Fast primary emotions cause the player to react without thinking. This is illustrated in the framework in Figure 5 where there is direct contact between the fast primary and behaviour layers. To force the player into taking an action that can later be exploited, the game designer can play on the player’s instincts. Such instinctual emotions can be controlled via the interface. For example, panting, screaming and other horrifying sounds will heighten the player’s level of fear (Garner & Grimshaw, 2011). With respect to the player’s central avatar, fast primary emotions are portrayed through the player’s control, although the avatar can communicate diegetic information such as their state of health by visual means. The player’s emotional proximity to their virtual embodiment intensifies their need to act to save themselves. The same emotions can be designed for in NPCs by programming in simple fight-or-flight behaviour. In narrative, fast primary emotions can be evoked through the inclusion of unexpected events in the story: a building suddenly blowing up, a plot-line twist that releases a mass of unforeseen enemies or the floor suddenly falling from beneath the player’s character. Because of the instinctual human reaction to such events the designer can predict with great accuracy what the player will do and then build in later narrative that adds to the player’s angst or resolves their problems.

Cognitive Appraisals

The majority of the research presented in this review addresses the issue of cognitive appraisals of emotion. This is due to their analytical and computational nature that can be exploited by computer programs, with the highly cited OCC and PAD models being the inspiration for many emotion systems embedded in games. In this framework, cognitive appraisals process emotions with more assessment than fast primary ones. The game interface can facilitate the player’s cognitive appraisals by providing clear information about the state of their avatar, other characters, goals that need to be achieved and the status of the game environment. The acentral avatar, such as the sim-like doll, must be capable of processing its own emotional state using cognitive appraisals when not under the control of the player. This is also true of the NPC, as demonstrated by this highly examined area in the review, though much of the work was repetitive, superficial and descriptive without further implementation and empirical trials to validate the frameworks. In narrative, cognitive appraisals are important in establishing the player’s goals and motivations stemming from the background story of the character they play in the game. Designers must be conscious of revealing the character’s personality clearly such that the player can take on the role and proceed with the game.

Emotional Behaviour

A computer game by its very interactive nature elicits player behaviour and this behaviour is inherently emotional given the human decision-making process and the important part affect plays in it. The interface can aid emotional behaviour by providing a seamless mechanism for the player to interact with the game environment. Hard to find (or remember) controls or convoluted interface designs will affect suspension of disbelief and in turn emotional-proximity. Feedback from the game is also key to facilitating the player’s experience. Emotional behaviour is less important for a central avatar, in which the character is almost always under the player’s control, although examples exist where an avatar has refused a player’s command. Such behaviour is more common in acentral avatars where a character simply refuses to perform the desired action because they aren’t in the right mood (e.g. The Sims). This also extends to NPCs whose programs determine their emotional state to calculate their next behaviour. The emotional state of an NPC can be expressed in a facial expression, body movement and speech. Furthermore, the emotional behaviour of the player can be monitored with respect to the narrative in order to move the player from one emotional state to another by adapting the plotline as necessary. For example, if a player fails at a task they are trying to perform several times in a row, the game system could present them with a hint or the surprise arrival of a gang of NPCs to render assistance.
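The closing example of this section, in which repeated failure prompts the game to offer help, can be sketched as a small counter. This is a hedged illustration rather than a prescribed mechanism: the class `AssistanceMonitor`, the default of three failures and the `"show_hint"` signal are hypothetical.

```python
from typing import Optional


class AssistanceMonitor:
    """Counts consecutive failures at a task and signals when help is due."""

    def __init__(self, failures_before_help: int = 3):
        # Hypothetical tuning value: how many failures in a row are tolerated.
        self.failures_before_help = failures_before_help
        self.consecutive_failures = 0

    def record_attempt(self, succeeded: bool) -> Optional[str]:
        """Return 'show_hint' when the failure streak reaches the limit, else None."""
        if succeeded:
            # Any success breaks the streak.
            self.consecutive_failures = 0
            return None
        self.consecutive_failures += 1
        if self.consecutive_failures >= self.failures_before_help:
            # Reset so that help is not offered again on the very next failure.
            self.consecutive_failures = 0
            return "show_hint"
        return None
```

The returned signal could equally trigger the arrival of assisting NPCs; the point of the sketch is only that the narrative adapts to an inferred emotional state, here frustration approximated by a failure streak.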

Emotional Experience

A player’s emotional experience builds in line with the layers of the framework, providing players with learning experiences and memories that persist for the remainder of their gameplay. The game interface acts as a reminder of the player’s gaming journey through mechanisms such as the display of inventory or screenshots of significant game events. The avatar also acts as a repository of experience, being able to visually display signs of battles won and lost through the clothes it wears or the limp in its step. In addition, a persistent game-world facilitates recall of emotional experience by maintaining a visual diary of the effect a player has had. The emotional experience in gameplay is heightened through the player’s emotional attachment to an NPC (Zagalo et al., 2006). This attachment can be goal-orientated, in that the NPC is required to work with the player to complete a task, or empathic, through a long-term, growing personal relationship with the character. With respect to game narrative, the emotional experience provided by the story is reiterated throughout to engage and immerse the player. Through consistent virtual world constructs, including a seamless environment and game world lore, the narrative can reinforce its experience by building on the past. For games in which the player’s mood is being monitored, the story can be adapted to move players from one emotional state to another.

Each layer and section of the conceptual framework presented herein represents a category of topics which, in the past, has been considered in the research literature as an independent and separate piece of the larger picture.

Conclusions and Further Work

Despite terms like emotional behaviour having negative connotations and suggesting irrational conduct, affect has always been an embedded part of the human psyche. Examination of the literature selected for this review provides guidelines for further work in this domain. The standout issue is the large number of disparate NPC designs based on the OCC and PAD models. Many present as an overview with pilot studies that have not been followed up or published. There is also a lack of work being performed on emotional avatar and narrative design. As mentioned earlier, this could be because the avatar is only required to act as a shell for the player’s desires, and giving it a will of its own might sever the emotional-proximity. Narrative has a long history of philosophical discussion preceding the invention of computer games and many established practices for eliciting emotion. It is assumed that the domain of interactive fiction, not included for study in this paper, might reveal more work in this area.

Of all aesthetic emotion-provoking mechanisms, sound is the one most dominantly reported in the review. This is not surprising, as visuals and sounds constitute the aesthetics of computer game interfaces. With the inability of games to provide stimulation for the other human senses, sound has widely been substituted in place of touch. Sounds not only relay information about on-screen action but can also be used to suggest a much larger environment that the player cannot see. It has been suggested that sound quality in computer games may be more important than image quality in influencing presence (Skalski & Whitbred, 2010).

Through examination of the pieces that provide a holistic setting for the conceptual framework for affective game design presented herein, the way in which emotion constructs influence each other in a complete computer gameplay experience becomes clear. Emotions are an integral part of intelligent human decision-making and experience in the real world, and should be mirrored in the virtual world to provide the same.

Appendix

Appendix can be found here.

This work is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License.

Copyright © 2015 Penny de Byl